The compliance team spent four months preparing for their ISO 42001 gap assessment. They built the governance documentation, drafted the responsible AI policy, and catalogued the AI tools their organization had approved.

On day one of the assessment, the assessor reviewed the inventory, set it aside, and asked one question:

'Are these all your AI systems?'

Nobody in the room was sure.

That moment is Clause 6.2.2. And it stops more ISO 42001 implementations than any other single requirement in the standard.

When InterSec built its own AI Management System and went through ISO 42001:2023 certification, the Clause 6.2.2 inventory exercise was the first place the gap showed up. Tools deployed by individual teams. AI services accessed through personal accounts. Automation workflows built without IT visibility. The approved inventory and the actual inventory were not the same list.

ISO 42001 Clause 6.2.2 requires organizations to maintain a complete inventory of all AI systems within the scope of the management system. Not just the enterprise-approved systems. Not just the ones IT procured. Every AI system operating inside your scope.

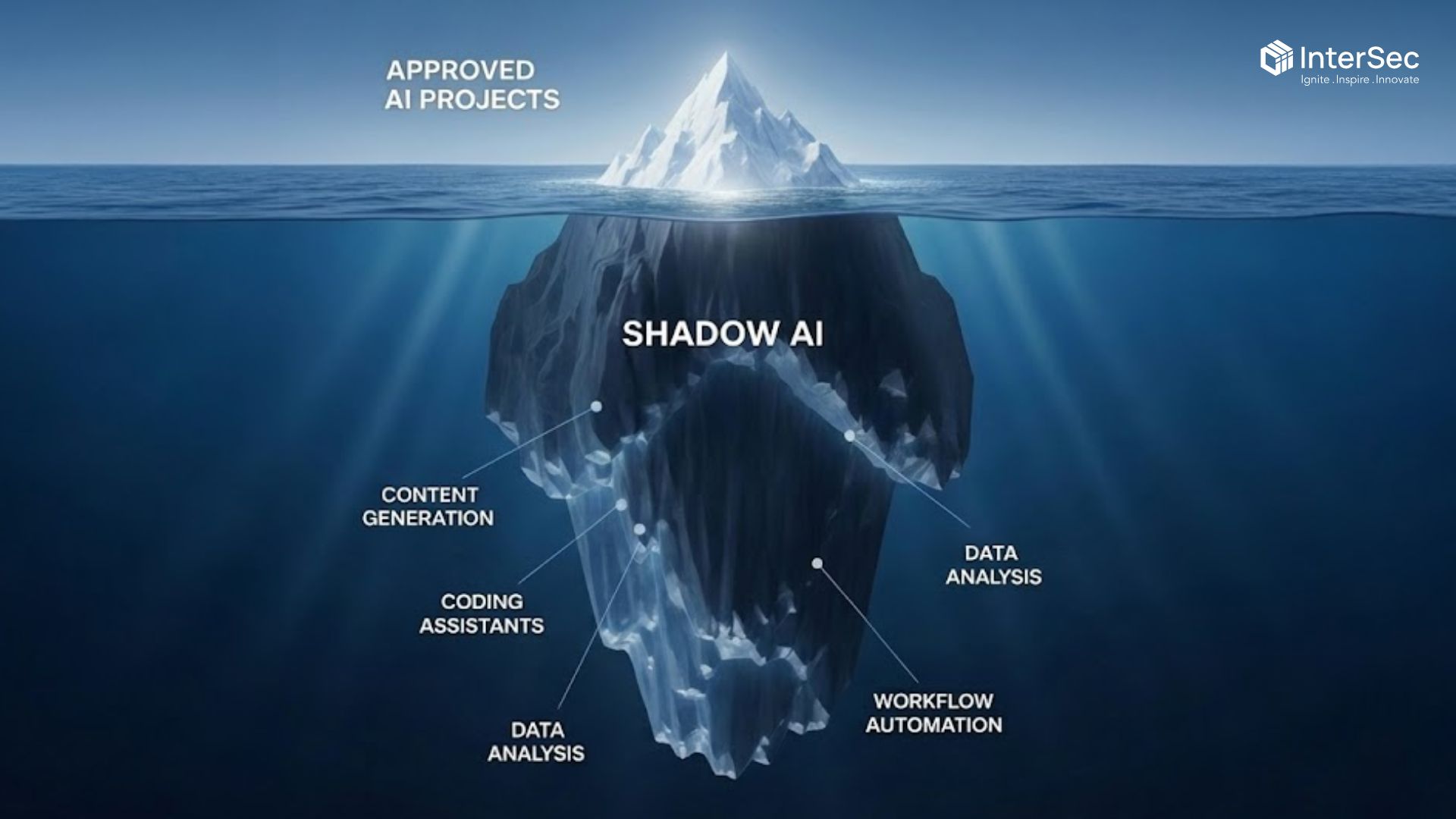

Shadow AI is the deployment of AI tools and services without organizational approval or governance oversight. It includes AI tools adopted by individual employees, services purchased through departmental budgets that bypassed procurement, and automation built using consumer AI products connected to internal systems. Shadow AI is not a hypothetical risk. It is present in most organizations running any meaningful AI footprint.

You cannot govern what you have not found.

Clause 6.2.2 does not ask whether your documented AI systems are governed. It asks whether your inventory is complete. An assessor reviewing Clause 6.2.2 evidence will flag every system that appears in the operating environment but not in the inventory. The gap between what an organization documents and what actually runs is where most implementations fail this clause.
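
The comparison an assessor makes can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the function name `find_inventory_gaps` and the system names are hypothetical, and it assumes discovery has already produced a list of observed systems whose names can be matched after simple normalization.

```python
def find_inventory_gaps(documented, observed):
    """Compare the documented inventory with systems seen in the environment.

    Names are matched case-insensitively after trimming whitespace. Real
    inventories usually need a stronger key (vendor plus product, or an ID).
    """
    documented_norm = {name.strip().lower() for name in documented}
    observed_norm = {name.strip().lower() for name in observed}
    return {
        # Running in the environment but missing from the inventory.
        "undocumented": sorted(observed_norm - documented_norm),
        # Listed in the inventory but not seen during discovery.
        "not_observed": sorted(documented_norm - observed_norm),
    }


# Hypothetical example data.
documented = ["Approved Chat Assistant", "Internal Chatbot"]
observed = ["approved chat assistant", "Personal LLM API Key", "Sales Scoring Tool"]

gaps = find_inventory_gaps(documented, observed)
print(gaps)
```

Everything under `undocumented` is a candidate finding. Entries under `not_observed` are worth a second look too, since they may be retired systems that were never removed from the inventory.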

Enterprise AI procurement processes were built for software that IT buys. Most Shadow AI does not arrive through IT. It arrives when a product manager uses an AI writing tool to draft customer communications, when an engineer connects a third-party LLM API to an internal workflow, when a sales team runs customer data through an AI scoring tool they found and expensed themselves.

None of those tools show up in a survey. A survey asks people what they use. It does not find tools that employees do not think of as AI, tools they adopted informally and never documented, or services they access through personal accounts on company hardware.

Think of your approved AI inventory as the visible portion of a much larger operating environment. The tools your governance team knows about are the ones that went through procurement. The tools that did not go through procurement are operating in the same environment, accessing the same data, and creating the same compliance obligations. The assessor examining Clause 6.2.2 does not distinguish between them. A system is either in scope or it is not.

This is a visibility problem. It requires technical discovery, not a policy update. Policies govern tools you know about. They have no effect on tools that are not in the inventory.

ISO 42001 provides the management system structure. It defines how your organization governs AI: through policies, leadership commitment, internal audit processes, and continuous improvement cycles. When an assessor asks for evidence, the management system produces the audit trail.

NIST AI RMF provides the operational approach to risk management inside that structure. Its four functions, Govern, Map, Measure, and Manage, give you the specific mechanisms for identifying AI systems, assessing their risks, putting controls in place, and monitoring those controls over time.

ISO 42001 is the filing cabinet. NIST AI RMF is what you put in the drawers. Without the cabinet, your risk management work has nowhere to live. Without the contents, the cabinet is empty when an assessor opens it.

For Clause 6.2.2 specifically, the NIST AI RMF Map function is where the discovery work lives. It requires organizations to identify all AI systems operating in their environment, including third-party services and employee-adopted tools. Running a thorough Map function before starting the Clause 6.2.2 inventory exercise is the most reliable way to close the gap between the documented inventory and the operating reality.

Shadow AI is a compliance problem because it makes Clause 6.2.2 impossible to satisfy completely. It is also a security problem that governance policies cannot fix.

When employees connect third-party AI APIs to internal systems without IT review, they create access points that security teams are not monitoring. When sensitive data flows into an external AI service that was not procured through the standard process, it leaves the controlled environment through a channel that most Data Loss Prevention tools were not designed to catch.

Data egress is the transfer of data from a controlled internal environment to an external location. AI tools create new egress paths that most security architectures do not account for, particularly when employees access consumer AI products on devices that connect to internal systems and data stores.

The security exposure and the compliance gap have the same root cause: the organization does not have visibility into its full AI operating environment. Solving the Clause 6.2.2 inventory problem solves both. An organization that completes a thorough technical discovery of its AI systems does not just close a compliance gap. It also maps the unauthorized access points and unmonitored integrations that create security exposure.

Our approach to ISO 42001 readiness treats the discovery phase as a security exercise first and a compliance exercise second. The Clause 6.2.2 inventory is the output of a technical discovery process, not a survey. Once the inventory is accurate, the governance work is tractable. Before that, it is not.

If you are evaluating ISO 42001 implementation or have one underway, Clause 6.2.2 is the right place to start the readiness assessment. The question is not whether your governance documentation is complete. The question is whether your inventory is accurate. InterSec's ISO 42001 readiness assessment begins with a technical AI discovery exercise. Schedule a readiness assessment to see where your current inventory stands against Clause 6.2.2 requirements.

Most organizations underestimate the scope problem at the start and discover it during the first internal audit or the external certification assessment.

The pattern is consistent. The governance team builds an inventory based on IT procurement records and stakeholder interviews. The inventory looks complete. The Clause 6.2.2 documentation reflects it. Then the assessor asks whether a technical discovery exercise was performed. It was not. The inventory reflects what people reported, not what was running.

The remediation is not complex once the scope is visible. Discovered tools either get brought into governance scope with the appropriate controls applied, or they get prohibited and removed. Neither outcome is difficult when the inventory is accurate. Both outcomes are impossible when tools are unknown.

The organizations that complete Clause 6.2.2 without findings are the ones that treated the inventory exercise as an active discovery project, not a documentation task. They ran technical scans, reviewed network logs for AI-related traffic, and conducted structured interviews with teams known to adopt tools informally. The result was a complete inventory. The governance work followed from there.
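
Reviewing network logs for AI-related traffic can start small. The sketch below assumes a simplified, whitespace-separated proxy log format (`timestamp user destination_host bytes_sent`) and a two-entry domain watchlist. Both are illustrative assumptions: real proxy and DNS logs vary by vendor, and a usable watchlist would be far longer and maintained over time.

```python
from collections import Counter

# Illustrative watchlist only. A real list would be longer and kept current.
AI_DOMAINS = {"api.openai.com", "api.anthropic.com"}


def find_ai_traffic(log_lines, ai_domains=AI_DOMAINS):
    """Count requests per (user, host) for hosts on the AI watchlist.

    Assumes each line looks like:
        <timestamp> <user> <destination_host> <bytes_sent>
    Lines with fewer than four fields are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 4:
            continue
        _, user, host, _ = parts[:4]
        host = host.lower()
        # Match the watchlisted domain itself or any of its subdomains.
        if any(host == d or host.endswith("." + d) for d in ai_domains):
            hits[(user, host)] += 1
    return hits


# Hypothetical log sample.
sample_log = [
    "2024-05-01T09:00:00Z alice api.openai.com 5120",
    "2024-05-01T09:01:10Z bob intranet.example.com 300",
    "2024-05-01T09:02:30Z alice api.openai.com 2048",
    "2024-05-01T09:03:00Z carol api.anthropic.com 900",
    "malformed line",
]

hits = find_ai_traffic(sample_log)
for (user, host), count in sorted(hits.items()):
    print(user, host, count)
```

Each hit is a lead for a structured interview, not an inventory entry by itself. The log shows that traffic exists. The interview establishes what the tool is used for and what data it touches.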

A complete Clause 6.2.2 inventory does not stay complete on its own. AI systems enter and leave your environment continuously. The NIST AI RMF Manage function, which covers ongoing monitoring of deployed systems, connects directly to the ISO 42001 continuous improvement requirement for exactly this reason. The inventory is a living document, not a one-time project.
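
One simple way to keep the inventory from going stale is to record when each entry was last verified and flag anything overdue for review. The sketch below is an assumption-laden illustration: the `last_verified` field, the `stale_entries` helper, and the 90-day interval are hypothetical choices, not requirements of either framework.

```python
from datetime import date, timedelta


def stale_entries(inventory, today, max_age_days=90):
    """Return names of inventory entries not verified within max_age_days."""
    cutoff = today - timedelta(days=max_age_days)
    return [entry["name"] for entry in inventory if entry["last_verified"] < cutoff]


# Hypothetical inventory records.
inventory = [
    {"name": "approved-chat-assistant", "last_verified": date(2024, 1, 10)},
    {"name": "sales-scoring-tool", "last_verified": date(2024, 5, 20)},
]

overdue = stale_entries(inventory, today=date(2024, 6, 1))
print(overdue)
```

A check like this only covers entries already in the inventory. It complements recurring technical discovery and does not replace it.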

ISO 42001 provides the management system structure for AI governance, including policies, audits, and continuous improvement cycles. NIST AI RMF provides the risk management functions — Govern, Map, Measure, and Manage — that define how to identify and control AI risks inside that structure. Together they create a program that is certifiable and operationally effective. For Clause 6.2.2 specifically, the NIST AI RMF Map function is the operational mechanism for producing the complete AI system inventory the clause requires.

ISO 42001 Clause 6.2.2 requires organizations to maintain a full inventory of all AI systems within the scope of the management system. This includes approved enterprise tools, departmental deployments, and any AI services used by employees within scope. Shadow AI — tools deployed without governance oversight — is the most common reason organizations fail this requirement. A survey-based inventory is rarely sufficient. Technical discovery is required to produce an accurate scope.

Shadow AI is the deployment of AI tools and services without organizational approval or governance oversight. It matters for ISO 42001 because Clause 6.2.2 requires a complete AI system inventory. Shadow AI makes that inventory incomplete before the implementation project starts. Beyond the compliance gap, unmanaged AI tools create cybersecurity risks including unauthorized data egress, DLP bypass, and unmonitored API integrations. The Clause 6.2.2 discovery exercise resolves both the compliance gap and the security exposure simultaneously.

When employees deploy AI tools without IT review, they create access points that security teams are not monitoring. Sensitive data entering external AI services through channels that bypass Data Loss Prevention tools constitutes unauthorized data egress. Third-party AI API connections to internal systems create credential exposure and unmonitored access points. These are the same class of unauthorized access problems found in other shadow IT contexts. The difference is that AI tools are accessible to non-technical users, which scales the exposure faster than traditional shadow IT.

Note: This article provides general information about ISO 42001 and NIST AI RMF alignment. It is not legal or certification advice. Consult your compliance or legal team for final interpretation of how these frameworks apply to your specific regulatory obligations.